S. 4159: Sammy’s Law

This bill, known as Sammy’s Law, aims to enhance child safety on large social media platforms by imposing specific requirements on them. Here’s a summary of its main provisions:

Definitions

The bill outlines several key definitions, including:

- Child: An individual under 17 who has an account on a large social media platform.

- Large Social Media Platform: A service that allows users to share content with others and has more than 100 million monthly active users or more than $1 billion in annual revenue.

- Third-Party Safety Software Provider: A company authorized to manage a child's online interactions and settings for safety purposes, with permission from the child or their parent.
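The age and size thresholds in these definitions can be read as simple predicates. The following is a minimal sketch based only on the figures given in this summary, not on the bill text itself; the function names, parameter names, and constants are hypothetical, and the content-sharing condition in the platform definition is left out for brevity.

```python
# Thresholds as stated in this summary (assumption: not checked against the bill text).
CHILD_AGE_LIMIT = 17                    # a child is "under 17"
MAU_THRESHOLD = 100_000_000             # more than 100 million monthly active users
REVENUE_THRESHOLD_USD = 1_000_000_000   # more than $1 billion in annual revenue


def is_large_platform(monthly_active_users: int, annual_revenue_usd: float) -> bool:
    """Return True if either size threshold from the summary is exceeded."""
    return (monthly_active_users > MAU_THRESHOLD
            or annual_revenue_usd > REVENUE_THRESHOLD_USD)


def is_child(age: int, has_account_on_large_platform: bool) -> bool:
    """Return True for an account holder under 17 on a large platform."""
    return age < CHILD_AGE_LIMIT and has_account_on_large_platform
```

Under this reading, a service needs to exceed only one of the two size thresholds to qualify, and a 17-year-old account holder is not a child.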

Access and Permissions

Large social media platforms must create and maintain real-time application programming interfaces (APIs) allowing parents or children (under 13, with parental consent) to delegate permissions to third-party safety software. This enables the software to:

- Manage online interactions and content created by or sent to the child.

- Transfer user data to the third-party provider at least once an hour.
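To make the hourly transfer requirement concrete, here is a minimal sketch of how a log of transfer timestamps could be checked against it. It assumes "at least once an hour" means no more than one hour between consecutive transfers; the function name and the idea of a timestamp log are hypothetical and do not come from the bill.

```python
from datetime import datetime, timedelta

# Assumption: "at least once an hour" means consecutive transfers are at most 1 hour apart.
MAX_TRANSFER_GAP = timedelta(hours=1)


def find_transfer_gaps(transfer_times: list[datetime]) -> list[tuple[datetime, datetime]]:
    """Return consecutive transfer pairs that are more than one hour apart."""
    ordered = sorted(transfer_times)
    return [(earlier, later)
            for earlier, later in zip(ordered, ordered[1:])
            if later - earlier > MAX_TRANSFER_GAP]
```

An empty result means every interval in the log satisfies the assumed one-hour limit; each returned pair marks a window in which the transfer cadence lapsed.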

Data Security and Privacy

Social media platforms are required to establish reasonable security measures to protect user data transferred to third-party providers. They must:

- Safeguard the confidentiality and integrity of this data.

- Notify children or parents about the data being transferred and provide summaries of user data shared with third-party providers.

Operational Requirements for Third-Party Providers

Third-party safety software providers must:

- Limit data collection to what is necessary for child protection.

- Establish and maintain security practices to protect user data received.

- Register with the Federal Trade Commission (FTC) and demonstrate compliance with specific conditions, including a prohibition on selling user data.

Compliance and Enforcement

The FTC is tasked with enforcing the law and will conduct biannual compliance assessments of social media platforms. It can take action against providers that fail to comply with the law. Violations will be treated as unfair or deceptive practices.

National Standard

The bill bars states from imposing additional requirements on social media platforms concerning child safety APIs, ensuring a uniform standard across the nation.

Limitation of Liability

The bill includes provisions that protect social media platforms from civil liability if they have acted in good faith in compliance with the law when transferring user data to third-party safety providers.

User Data Disclosure

Third-party providers may disclose user data only in specific circumstances, such as:

- In response to legal requests from government bodies.

- To a child or parent, when the data concerns that specific child.

- When a serious threat to health or safety is identified.

Relevant Companies

- META (Meta Platforms, Inc.): As a large social media platform, it will need to comply with the new requirements regarding permission APIs and data security measures.

- GOOGL (Alphabet Inc.): Google's platforms, including YouTube, will need to adjust their API accessibility and user data management in accordance with the bill.

- SNAP (Snap Inc.): As a social media service popular among younger users, Snap will be directly affected by the new regulations aimed at child safety and data management.

This is an AI-generated summary of the bill text. There may be mistakes.

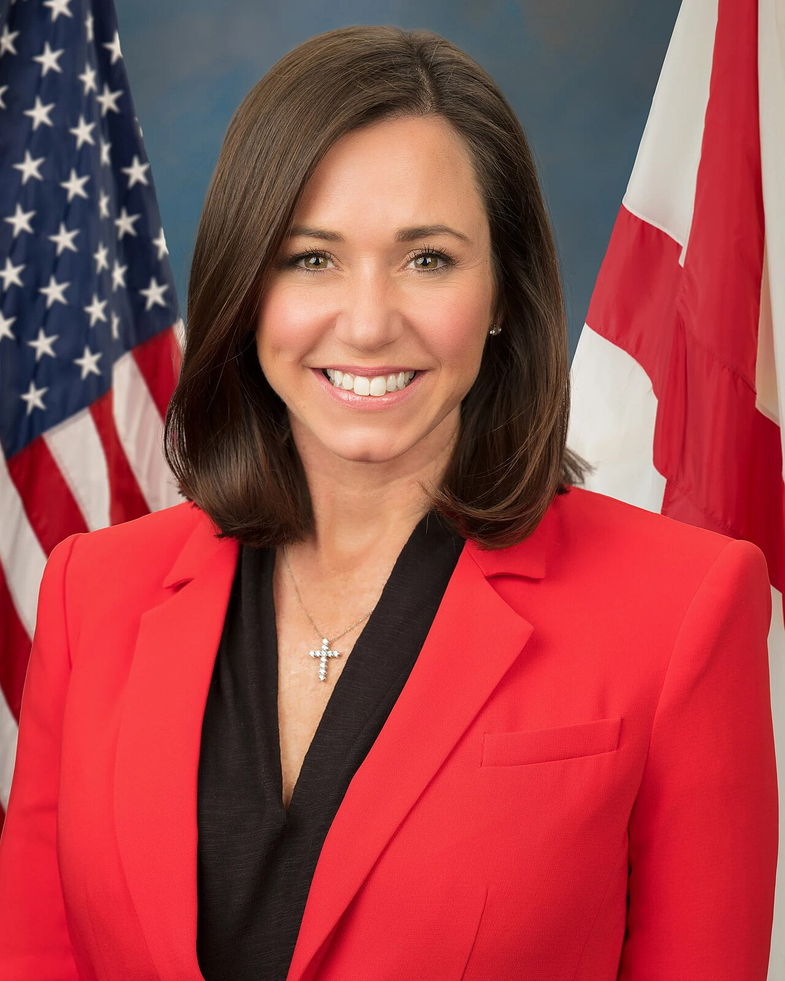

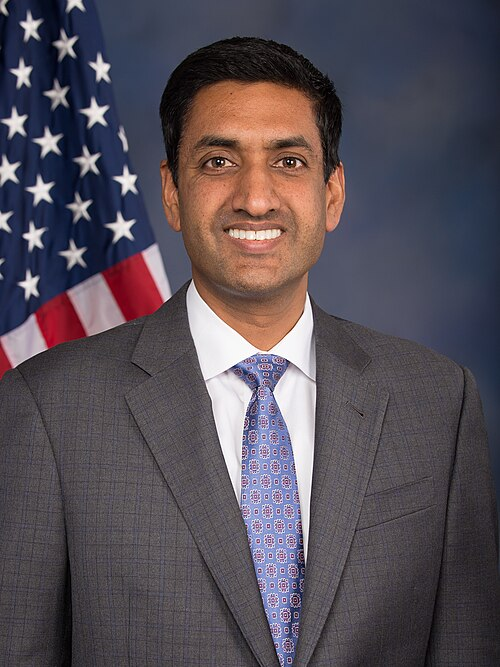

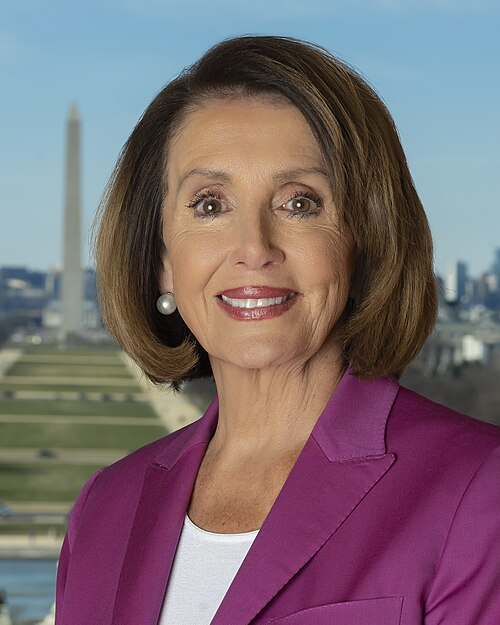

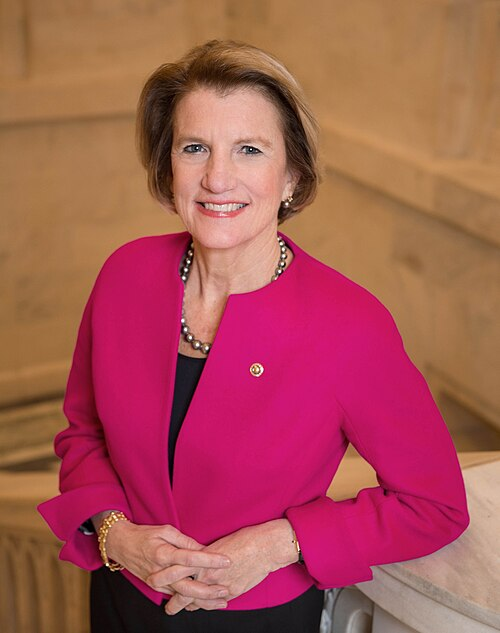

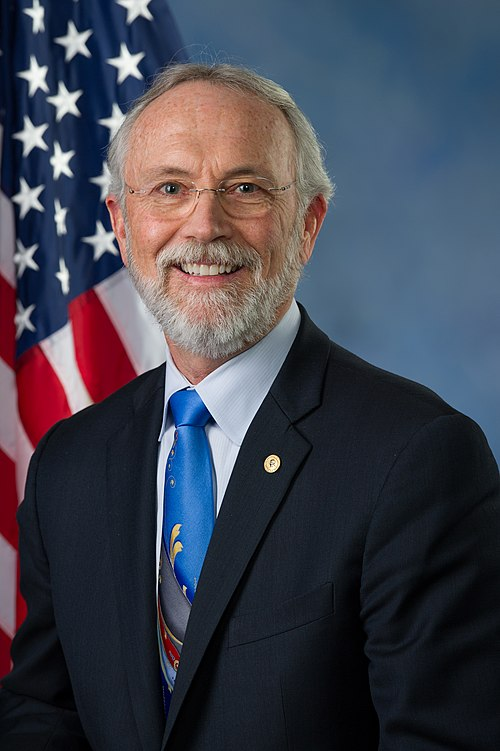

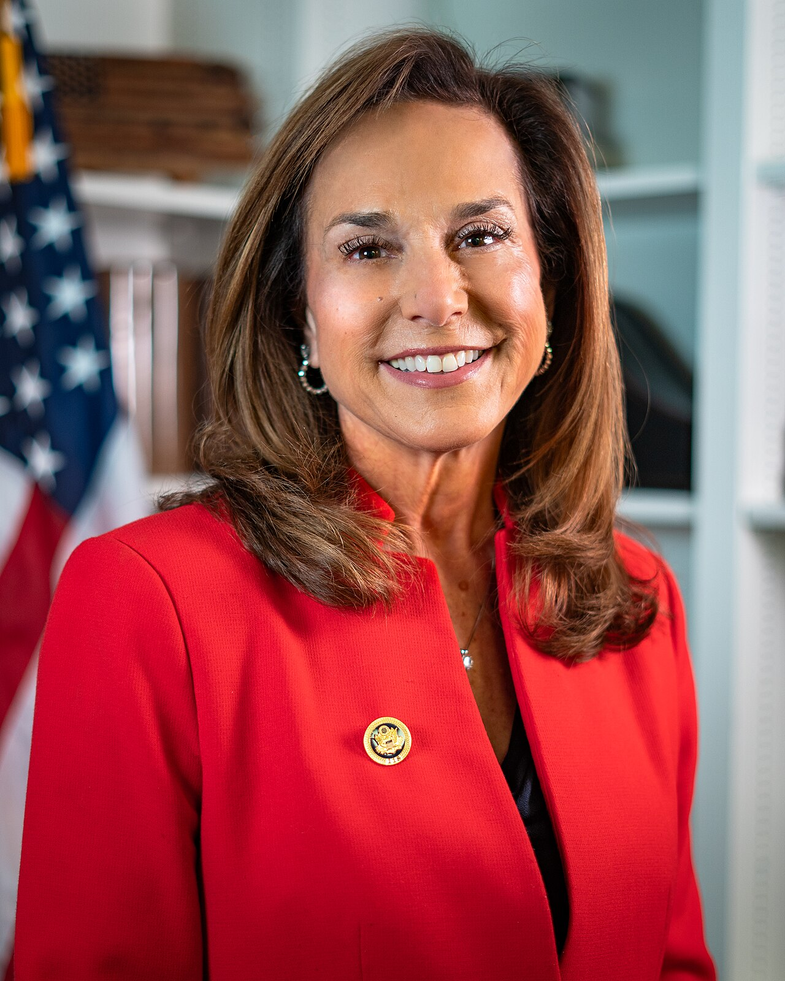

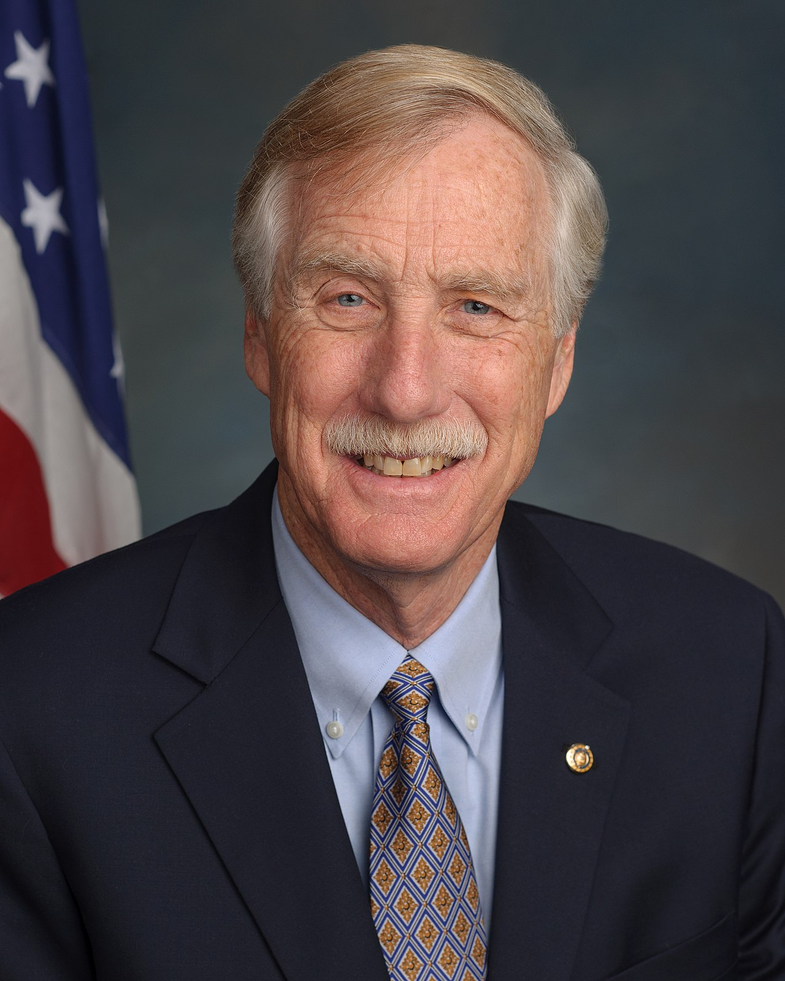

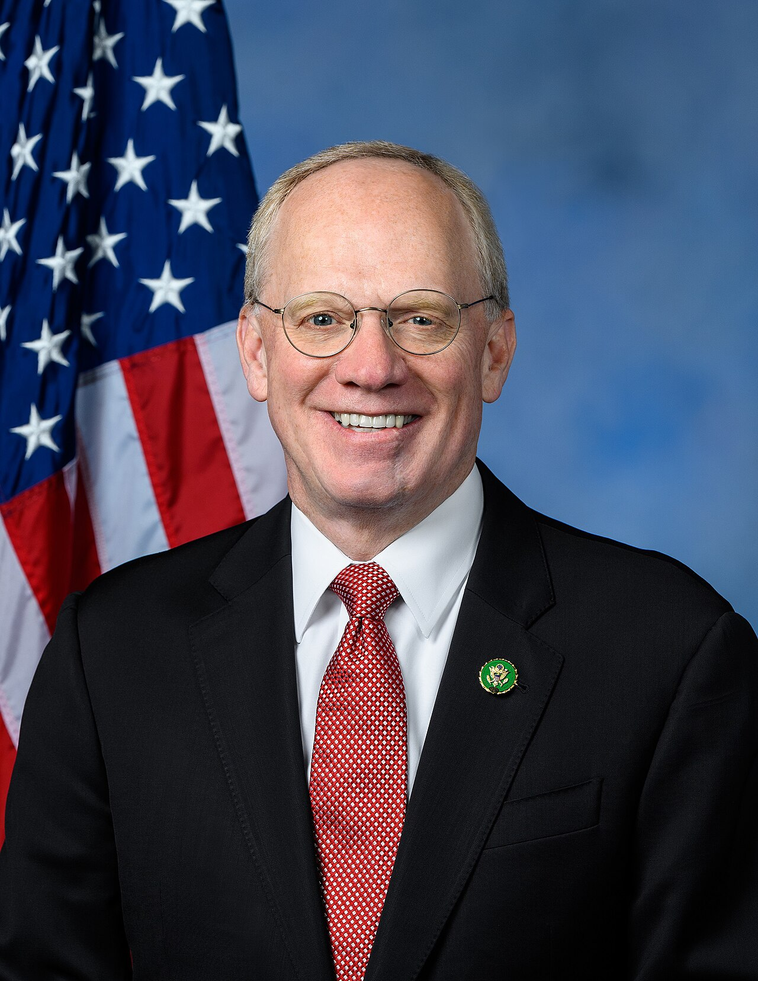

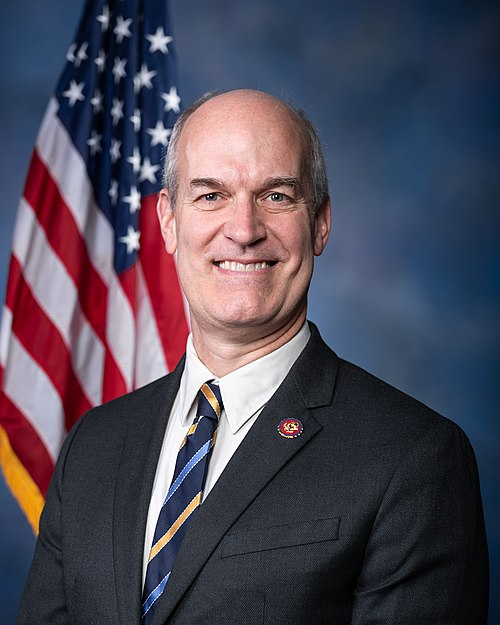

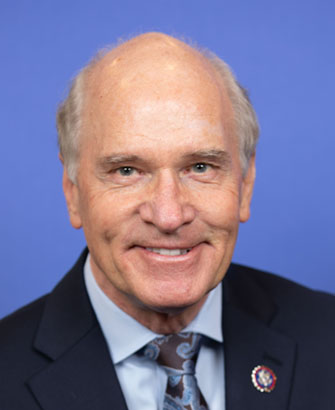

Sponsors

3 bill sponsors

Actions

2 actions

| Date | Action |
|---|---|
| Mar. 20, 2026 | Introduced in Senate |
| Mar. 20, 2026 | Read twice and referred to the Committee on Commerce, Science, and Transportation. |

Corporate Lobbying

0 companies lobbying

None found.

* Note that there can be significant delays in lobbying disclosures, and our data may be incomplete.